by Charles River Interactive | October 1, 2015 | Organic Search, Search Engine Marketing, SEO, SEO Industry Trends, Uncategorized

We’ve done it. You have done it. So have a lot of other people we all know.

What is it?

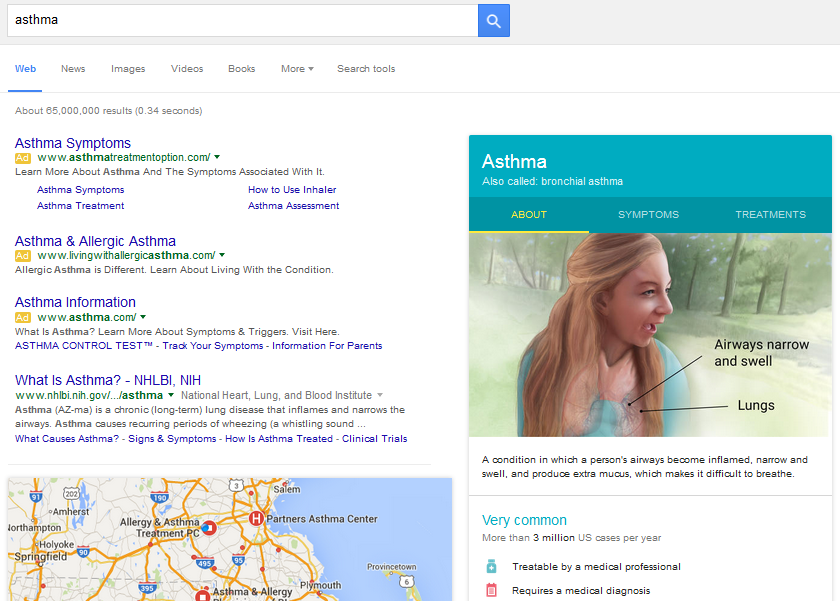

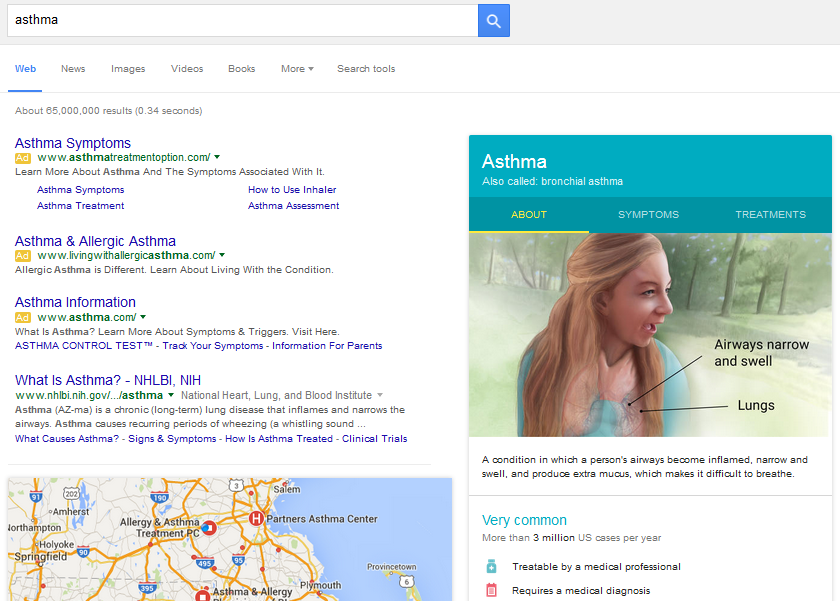

Searched for information about a health condition online. As of February 2015, Google made it easier to find this type of information with a knowledge graph containing details for more than 400 medical conditions. And in early September, they more than doubled the number of conditions and enhanced the visual appearance of the health conditions knowledge graph, and added a downloadable PDF with the information. So now, when you search for a common condition such as “asthma”, you will see a page that looks like this:

I’m sure you’re thinking, “This is great – I get information about the condition, including symptoms and treatments. I don’t see any problems.” The problem arises if you are a hospital or medical facility with an asthma treatment program, and you have just spent time optimizing your web pages to rank in one of the top positions for the term. Now you are not only competing with health information sites such as WebMD and with other hospitals; you also need to draw the searcher’s attention away from the bold visual.

This development is not all bad news. There are opportunities for hospitals and health care providers, including:

- Users who scroll past the knowledge graph to organic results are likely to be more qualified leads. Students and casual browsers who are simply looking for definitions and general information will have no need to look further. Patients and families truly looking for care for a condition will be seeking additional information.

- Long-tail queries are (at least for now) not displaying the knowledge graph. So although phrases such as “exercise induced asthma” and “pediatric asthma” have less search volume than the broad term “asthma”, organic search results have better visibility and thus better click-throughs.

Beyond this, the question that remains for hospitals and healthcare providers is whether there is any benefit in maintaining pages on their sites about medical conditions. For users who are seeking care for a condition, there is still value in gaining a ranking position in that space, as the knowledge graph does not provide direction for treatment. Bottom line – perhaps there is a silver lining in the knowledge graph in allowing hospitals to do what they do best: provide treatment.

by admin | June 27, 2014 | Local SEO, Paid Search, Search Engine Marketing, SEO, Uncategorized

Since Google+ launched in 2011, many marketers and non-marketers have been reluctant to embrace it as a preferred social platform. Any mention of Google+ is usually met with an eye roll and the common (often rhetorical) question: ‘Who even uses Google+?’ What has been lost on some, however, has become an advantage for others, considering the Google-owned social network functions hand-in-hand with local search and organic search display.

You can find local search influence in the Google Carousel, Google Maps, and on mobile devices – all three pull from Google+ and local search.

Google+ commands 300 million users and influences 43% of all Google searches with local query intent. Since the early years of Google’s social network, there have been several updates to increase ease of use. Previously known as Google Places and then Google+ Local, the platform evolved last week to become Google My Business. The recent launch of Google My Business has changed the way we utilize Google products through the successful integration of social, search, and maps, all of which provide a better experience for customers worldwide (Google My Business is available in 236 countries and 65 languages).

Two of the most important updates in the evolution of Google+ are the improved user experience and the cleaner dashboard, both of which address complaints that Local SEO experts have been voicing for years. Google took notice and delivered a strong solution with Google My Business.

Google My Business Updates – in plain English

by admin | December 9, 2013 | Organic Search, SEO, Uncategorized

With a majority of web traffic coming from Google, many website owners tend to forget that Bing and Yahoo often round out the list of top search engines in second and third place. While their numbers don’t (and maybe never will) match the traffic from Google, it’s important to keep in mind what’s on Bing’s radar.

Unlike Google, Bing does not officially announce algorithm updates. However, it does announce new features, such as deep links displayed directly in the search box, as seen below. This feature is similar to Google sitelinks; however, Bing displays these results before the user even clicks the search button.

by admin | November 25, 2013 | Organic Search, SEO

Although Schema.org was introduced several years ago, structured data markup only started to gain real popularity with the rollout of Google’s Data Highlighter tool in late 2012 and early 2013. The Data Highlighter tool makes it easy for non-technical people, and people who don’t have direct access to their website CMS, to mark up their data for better search result visibility and rich snippet display. However, many organizations are still not capitalizing on this opportunity because they are unsure about the benefits or about what to do.

What are the Benefits of Schema, Structured Data, Markup, etc.?

Structured data gives search engines the information they need to display rich snippets, which give the viewer relevant information before they even click on a result.

Is Marking Up Data Worth My Time?

In short, yes. The Data Highlighter was created to help Google (rather than other search engines) learn about your website; therefore, markup created through Data Highlighter does not carry over to other search engines. For this reason, if resources allow, adding the Schema code directly to your website may be a better option, as it is supported by Google, Bing, Yandex, and Yahoo.

That being said, if you do not have these capabilities or are not looking to spend a great deal of time marking up pages, the Data Highlighter tool can still be of great benefit to your website and your organic traffic. Tagging pages only requires you to highlight the phrase and select a tag. Google does the rest.

What Should I Mark Up?

Anything you think would be helpful! At the very minimum, it is recommended to mark up your business details, such as phone number, address, and hours. The rest depends on your business. If you sell products, you can use Schema markup for pricing and reviews. If your business has events, mark up the location, dates, and times. The more you mark up, the easier it is for search engines to figure out your site structure.
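To make the “business details” case concrete, here is a minimal sketch in Python that builds a Schema.org `LocalBusiness` description and wraps it in a script tag. It assumes the JSON-LD syntax, which is one of the ways Schema.org vocabulary can be expressed; the post itself does not prescribe a syntax. The business name, phone number, address, and the helper names `local_business_jsonld` and `to_script_tag` are all made up for illustration.

```python
import json


def local_business_jsonld(name, telephone, street, city, region,
                          postal_code, opening_hours):
    """Build a Schema.org LocalBusiness description as a plain dict.

    Hypothetical helper: the property names come from the Schema.org
    vocabulary, but the function itself is only an illustration.
    """
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "openingHours": opening_hours,
    }


def to_script_tag(data):
    """Serialize the dict and wrap it in a JSON-LD script tag."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + '\n</script>'


# Placeholder values, not a real business.
example = local_business_jsonld(
    name="Example Clinic",
    telephone="+1-555-0100",
    street="1 Main Street",
    city="Springfield",
    region="MA",
    postal_code="01101",
    opening_hours=["Mo-Fr 09:00-17:00"],
)
snippet = to_script_tag(example)
print(snippet)
```

The printed snippet would be pasted into the page’s HTML. Products, reviews, and events follow the same pattern with their own Schema.org types and properties.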

Do I need to mark up EVERY page?

With Data Highlighter, you can tag a group of pages. As Google becomes more familiar with your website, it will attempt to automatically tag similar pages for you, although it is generally recommended to highlight and tag as much as you can for better accuracy. Keep in mind that marking up every page does not necessarily mean Google and other search engines will always show the markup. Often, only the first few results will display rich snippets.

Helpful Tools for Marking up Content

Google offers a helpful Structured Data Markup Helper for more advanced users who wish to embed the structured data directly in their webpages. This tool works similarly to Data Highlighter; however, instead of publishing directly, it gives you the HTML code with the markup to add to your website. You can check how Google sees your site by using the Structured Data Testing Tool. The Schema.org website also lists available markups and how to get started.
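Before running a page through a testing tool, a quick local sanity check can catch obvious gaps. The sketch below uses only the Python standard library and assumes the markup is embedded as JSON-LD in a `<script type="application/ld+json">` tag. It is not how Google’s tools work and does not replace them. The sample page and the list of “expected” fields are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every JSON-LD script tag on a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.items.append(json.loads("".join(self._buffer)))


def extract_jsonld(html):
    """Return a list of JSON-LD objects found in the HTML string."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


def missing_fields(item, expected=("name", "telephone", "address")):
    """List the expected keys the item lacks (the key list is an assumption)."""
    return [key for key in expected if key not in item]


# Hypothetical page with a business description that lacks an address.
page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "LocalBusiness",
 "name": "Example Clinic", "telephone": "+1-555-0100"}
</script>
</head><body><p>Hello</p></body></html>"""

items = extract_jsonld(page)
for item in items:
    print(item["@type"], "is missing:", missing_fields(item))
```

A check like this only confirms that the markup parses and carries the fields you meant to include; whether a search engine actually shows a rich snippet is still up to the search engine.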

by Charles River Interactive | October 16, 2013 | Organic Search, SEO, Uncategorized

The SEO world is buzzing over the loss of organic keyword data from Google. While it does change *some* of SEO, it’s important to remember that SEO isn’t only about keywords. In fact, there is still a LOT of data out there, including quite a bit of referring keyword data and proxies. SEO, like any industry, is always evolving and changing, so take heart! This does not spell the end of SEO. Read on for why.

Not all keyword data is gone.

Although most websites receive the majority of their traffic from Google, other search engines like Bing and Yahoo follow their own rules. The 20-30% or so of traffic from these and other search engines does have valuable nuggets of search data in it. There are also other keyword research tools available, including Google Trends, Webmaster Tools, and AdWords. And although you might have heard that keyword rankings are dead (viva la keyword rankings), ranking data is still very useful for benchmarking which keywords you already rank for and which areas need improvement.

SEO goes beyond Keyword data

SEO is not only about keywords! If search engines can’t “see” or “read” a website because of broken links, improper redirects, navigation issues, or site speed issues, it’s likely the site won’t rank well in organic search results. Even the best, most beautiful website in the world won’t convert if no one can find it!

There are other cool SEO tools, including new(ish)-to-the-scene techniques like Schema.org and the Data Highlighter tool in Webmaster Tools, both of which make your search results more interesting and encourage click-throughs. There are also Authorship, Places/Local, Video, Images (you get the idea): basically the kitchen sink that shows up in most search engines’ SERPs. These are all valuable tools in the SEO arsenal just begging for optimization.

And, who could forget Social Signals (yes, with 2 capital S’s :) ). While social media posts that are set to private or with limited sharing don’t show up in search results, being active and participatory in social profiles boosts your online presence, and it’s been said that ~10% of Google’s algorithm takes these signals into account.

And last (but certainly not least) is CONTENT. We still have all kinds of data about organic searcher behavior and interaction with a site’s content that is very useful for benchmarking successes and informing future initiatives.

Conclusion

Be active, be fresh, be smart. Over the past 5 years or so, SEO has been moving much faster toward a more ‘natural’ approach – the days of link buying and stuffing pages with keywords are gone. If you’re on top of keeping your website code clean, are active with social media, and create fresh, properly tagged content, people (and search engines) will take notice.

by Charles River Interactive | October 2, 2013 | Organic Search, SEO, Uncategorized

The search engine landscape is *constantly* evolving – and the past month has been a big one for major changes. On the heels of last week’s big news that Google will be moving all of its organic search keyword data into the (not provided) bucket, Google Senior VP Amit Singhal announced that the search engine giant has been rolling out the “Hummingbird” index update. This story has been picked up even by many mainstream news providers, but what does it all mean?

Google Index Basics

The last time Google made a significant change to its search index was with 2010’s Caffeine, which provided “50 percent fresher results for web searches than (the) last index.” This update confirmed what SEOs had been encouraging clients to pursue for some time – blogging and other “fresh” content initiatives like video, news and real-time updates were going to become critical to getting and keeping organic search rankings.

Hummingbird is similar in that it’s not a small algorithm change – there are plenty of those – but a completely new algorithm – a change to the index itself. Before Caffeine, Google’s index had “several layers, some of which were refreshed at a faster rate than others; the main layer would update every couple of weeks. To refresh a layer of the old index, Google would analyze the entire web.” This is why it used to take much longer for SEO strategies to take effect – websites just weren’t hit as often.
